EU AI Act Compliance: What Organizations Must Do Now

Learn EU AI Act compliance requirements, including risk categories, governance, and key actions organizations must take to meet regulatory standards.

Sofia Nabiha Herradi, CISM, CISA, CIPP/E, CIPP/US, CMMC-CCP (AI Governance & Compliance Consultant)

4/14/2026 · 3 min read

The EU AI Act is the world’s first comprehensive regulatory framework governing artificial intelligence. It introduces strict requirements for organizations that develop, deploy, or use AI systems, especially those impacting individuals, critical infrastructure, or decision-making processes.

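The prohibitions have applied since February 2025 and the obligations for general-purpose AI models since August 2025, and most remaining provisions are scheduled to apply from August 2026. Organizations must now move from awareness to structured compliance programs.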

WHAT IS THE EU AI ACT?

The Act establishes a risk-based approach designed to ensure that AI systems are developed and used in a safe, transparent, and accountable manner.

Under this framework, AI systems are classified into four categories based on their potential impact on individuals and society.

Unacceptable Risk systems are prohibited entirely, including applications such as social scoring or manipulative AI that can harm individuals or undermine fundamental rights.

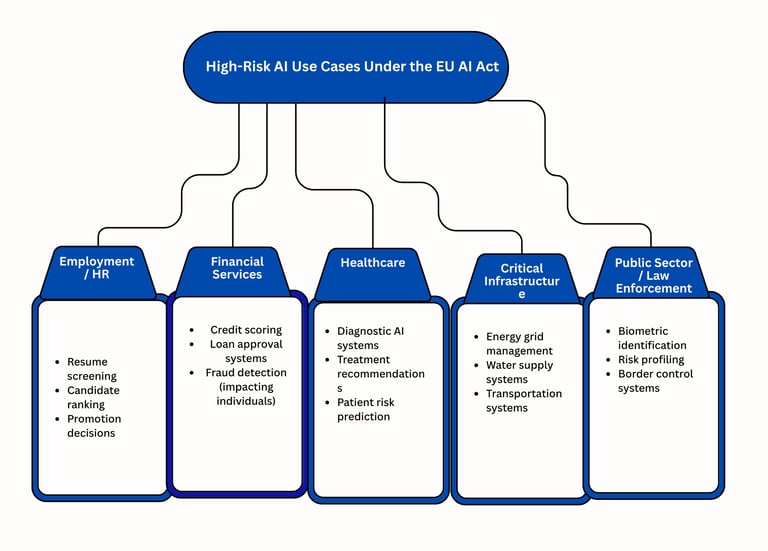

High-Risk systems are subject to strict regulatory requirements due to their potential impact on critical areas such as employment, healthcare, financial services, and essential infrastructure.

Limited Risk systems must meet transparency obligations, such as informing users when they are interacting with AI.

Minimal Risk systems are not subject to specific obligations under the Act but must still comply with general legal requirements.

Whether an AI system falls into the high-risk category depends on its intended use, particularly in regulated or sensitive domains such as hiring and worker management, creditworthiness assessment, healthcare, and critical infrastructure.

In addition, the Act introduces specific obligations for General Purpose AI (GPAI) models, including transparency and technical documentation requirements, with additional obligations such as model evaluation, risk assessment and mitigation, and serious-incident reporting for models that pose systemic risk.

KEY COMPLIANCE REQUIREMENTS

For high-risk AI systems, the EU AI Act requires a structured governance framework across the AI lifecycle:

1. Risk Management System

Organizations must establish a continuous risk management process that identifies, assesses, and mitigates risks associated with AI systems. This includes evaluating risks before deployment and continuously monitoring system performance in production.

2. Data Governance and Quality

AI systems must be trained, validated, and tested using high-quality data. Organizations are required to ensure that datasets are relevant, sufficiently representative, and, to the best extent possible, free of errors and complete, and to examine them for possible biases. Data lineage and traceability must also be maintained.

3. Technical Documentation

Organizations must maintain detailed documentation that demonstrates how the AI system operates, including its design, intended purpose, and limitations. This documentation must be sufficient to support regulatory review and conformity assessments.

4. Transparency and Human Oversight

Users must be clearly informed when interacting with AI systems. Additionally, organizations must implement mechanisms to ensure meaningful human oversight, particularly for systems that impact critical decisions.

5. Accuracy, Security, and Robustness

AI systems must achieve appropriate levels of accuracy and reliability. They must also be resilient against errors, manipulation, and cybersecurity threats, ensuring consistent and safe operation.

6. Enforcement and Penalties

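Non-compliance with the EU AI Act can result in significant penalties, tiered by the severity of the violation: up to €35 million or 7% of total worldwide annual turnover for prohibited practices, up to €15 million or 3% for most other violations, and up to €7.5 million or 1% for supplying incorrect or misleading information to authorities. For each tier the higher of the two amounts applies, while for SMEs and start-ups the lower amount applies.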
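To illustrate how these ceilings work in practice, the following Python sketch computes the maximum possible fine for a given violation tier. It assumes the tiered ceilings in Article 99 of the Act as adopted (€35 million or 7%, €15 million or 3%, €7.5 million or 1% of total worldwide annual turnover, whichever is higher, and whichever is lower for SMEs and start-ups). The tier labels and the `max_fine_cap` function are hypothetical. The sketch ignores the separate fine regime for general-purpose AI model providers and returns only the statutory ceiling; actual fines are set case by case by the authorities.

```python
from decimal import Decimal

# Hypothetical tier labels; amounts assume the fine ceilings in Article 99 of the EU AI Act.
TIERS = {
    "prohibited_practice": (Decimal("35000000"), Decimal("0.07")),
    "other_obligation": (Decimal("15000000"), Decimal("0.03")),
    "incorrect_information": (Decimal("7500000"), Decimal("0.01")),
}


def max_fine_cap(tier: str, worldwide_turnover_eur: Decimal, is_sme: bool = False) -> Decimal:
    """Return the statutory fine ceiling (not the actual fine) for a violation tier."""
    fixed_cap, turnover_share = TIERS[tier]
    turnover_cap = worldwide_turnover_eur * turnover_share
    # Undertakings: whichever amount is higher; SMEs and start-ups: whichever is lower.
    return min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap)
```

For a company with €1 billion in worldwide turnover, a prohibited-practice violation is capped at €70 million, because 7% of turnover exceeds €35 million; for an SME with €20 million in turnover, the same violation is capped at €1.4 million. `Decimal` is used instead of `float` to avoid rounding artifacts in monetary amounts.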

WHY THIS MATTERS NOW

Non-compliance can lead to significant penalties and operational restrictions.

Beyond fines, the broader impact includes:

Loss of customer trust

Reputational damage

Delays in product deployment

Organizations that act early gain a competitive advantage by building trusted, compliant AI systems.

HOW TO PREPARE

A practical approach includes:

Conducting an AI inventory and classification

Mapping systems to EU AI Act risk categories

Performing gap assessments against regulatory requirements

Establishing governance aligned with ISO/IEC 42001, the international standard for AI management systems

Integrating AI compliance into existing GRC programs
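The first two steps, inventory and classification, can be sketched as a simple register. The Python below is a minimal illustration under heavy assumptions: the `AISystem` fields, the use-case labels, and the rule order are hypothetical simplifications. Real classification requires legal analysis of the Act's prohibited practices (Article 5) and its high-risk criteria (Article 6 and Annexes I and III).

```python
from dataclasses import dataclass
from enum import Enum


class RiskCategory(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical, simplified use-case labels for illustration only.
PROHIBITED_USES = {"social_scoring", "manipulative_techniques"}
HIGH_RISK_USES = {"employment", "credit_scoring", "critical_infrastructure", "medical_device"}


@dataclass
class AISystem:
    name: str
    use_case: str
    interacts_with_people: bool = False  # e.g. a chatbot, which triggers transparency duties
    owner: str = "unassigned"


def classify(system: AISystem) -> RiskCategory:
    """Assign one risk category, checking the strictest category first."""
    if system.use_case in PROHIBITED_USES:
        return RiskCategory.UNACCEPTABLE
    if system.use_case in HIGH_RISK_USES:
        return RiskCategory.HIGH
    if system.interacts_with_people:
        return RiskCategory.LIMITED
    return RiskCategory.MINIMAL


def build_register(systems: list[AISystem]) -> dict[RiskCategory, list[str]]:
    """Group inventory entries by risk category as input to a gap assessment."""
    register: dict[RiskCategory, list[str]] = {category: [] for category in RiskCategory}
    for system in systems:
        register[classify(system)].append(system.name)
    return register


inventory = [
    AISystem("resume-screener", "employment", owner="HR"),
    AISystem("support-chatbot", "customer_support", interacts_with_people=True),
    AISystem("spam-filter", "email_filtering"),
]
register = build_register(inventory)
```

Checking the strictest category first mirrors how the risk tiers apply: a system that matches a prohibited use must not be placed on the market or used, regardless of its other attributes. In practice a high-risk system can also carry transparency obligations, which this single-label sketch does not capture.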

FINAL THOUGHT

The EU AI Act is more than a regulatory requirement; it marks a shift toward responsible and accountable AI.

Organizations that embed governance early will not only meet compliance obligations but also build trust, resilience, and long-term value.

Need help preparing for EU AI Act compliance?

We help organizations design and implement AI governance programs aligned with global regulatory standards.


AI Governance Advisory

Governance, Risk & Compliance for AI Systems

Service of Insight IT Security & Compliance

Serving clients across the U.S. and globally

Email: info@cyberdsc.com